What is big O?

What's up, everyone!? 🤙 Today we’ll dive deeper into time complexity and the big O notation. There are a number of factors that have an impact on the time complexity, in other words how fast our application would be.

There is one important thing we have to keep in mind. There are two different types of complexity: Space and Time. The first one is related to how much memory our application uses, the second one describes how many operations our application executes (we must keep in mind, that JavaScript as a language is single-threaded so the overall number of operations is important but also the time complexity of particular operation matters).

Today we’ll talk about Time Complexity. Or, rather, something more, that can effectively describe our Time Complexity - big O notation.

Easily speaking, the big O notation allows us to estimate if our code is fast or slow. Well, “fast” and “slow” are not enough, in most cases, we should look at how our algorithm will behave on the scale.

What if 1000000000 users will open our website (ok, probably even

Netflix isn’t prepared for those types of numbers 😂 ).

What if our loop has to handle 1000000000 elements in our array?

What if this array would have 100 nested arrays inside?

What if I stop giving those ridiculous examples? 🧐

In today’s post, we’ll answer all these questions! Ok, so let’s go through all of the most popular complexities.

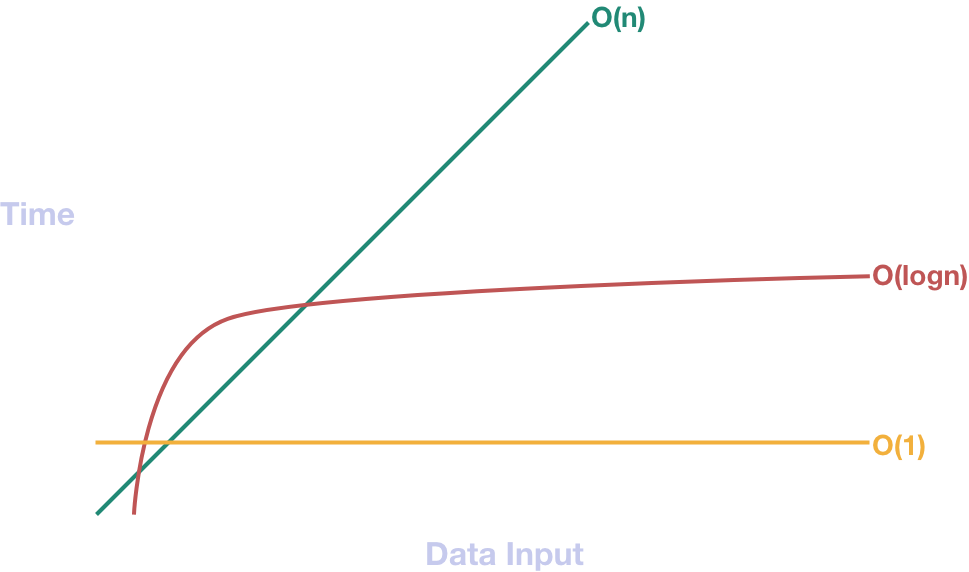

Constant - O(1)

The easiest to understand (and the fastest!) is the constant order. What does it mean? Our operation will ALWAYS take the same amount of time. This is the most desirable type of order because it’s the most predictable. We always know how our algorithm would behave and how can it affect our performance. We also don’t have to write complicated test cases because we know that every single one will always work the same way! Ok, so you probably asking which kind of operations are O(1)? These ones, which can reach the appropriate memory area without the need for searching.

Examples:

array[2] // give me this third element of an array

object.property // give me this property of this particular object

Every primitive operation like sum, subtraction, etc.

But there are some tricky ones. What about shift operation? Theoretically, all it does is removing the first element of an array. So you might think that our intelligent thread knows exactly where to go. Although it actually removes only the first element, it also has to change the order of all others. The second element will be first, third will be second, etc.

Linear - O(n)

In this case, the number of operation that has to take place is proportional to the number of inputs. If they are the same, the complexity would be O(n), if they are two times larger, it would be O(2*n). It doesn’t matter that much because the scale remains the same. This complexity is obviously slower than the constant one but at the end of the day, it’s pretty common. I mean, probably every website has some operations that have this complexity. The simplest and easiest to understand the form of this one is the loop. ForEach, Map, For, While, there are many different types but at the end of the day they all work the same way.

Logarithmic - O(logn)

Ok, so this one sounds interesting. All you need to know is, in this case, the number of operations is decreasing when the number of your inputs grow. Imagine the loop which in the first iteration you have to proceed 100 operations, in the second one 50, in the third 25, etc. The fraction doesn’t matter that much, because again - the only thing that matters is scaling. As you may imagine this one is pretty good because it grows very slowly. It means, it’s better than the linear one but worst (slower) than the constant one. Ultimately this is the one that is, the most desirable among all developers.

Big-O complexity chart

Ok, so now, when we’ve described all good ones (constant, logarithmic) and all average ones (linear), let's talk about one of which you should avoid in many cases.

Quadratic/Cubed - O(n²)/O(n³)

There isn’t that much to talk about. It’s just a loop inside a loop. That’s it.

Every time, when we put a loop inside a loop our complexity is multiplied by n. So for example:

array.map(a => a.map(b => b.map(c => c.prop)))

would have the complexity of n³.

As you probably can imagine this approach of nesting loops can put you in some serious problems if it’d get out of control.

That’s why we should always be aware of what we’re putting inside a loop.

Sometimes we don’t even realize that we’re creating the quadratic complexity. Take look at this example:

const isGreaterThan = value => element => ( element > value );

array.map(element => element.find(isGreaterThan(10)));

What with this example? Theoretically, we have just one loop (map) but notice, that the find function works exactly like a loop. It doesn’t just point this particular value (it’d be a constant complexity then).

It literally goes from first to the last index searching for this particular value. What if this value would have the last index?

Remember, in terms of the big O notation we always look at the worst possible case. You must always remember to pay more attention when you deal with an array inside a loop.

Some of the tricky functions:

- every

- filter

- find

- includes

- reduce

Especially you should pay attention to array sorting algorithms. Bubble sort, selection sort, quick-sort in the worst-case scenario can cost you a lot of time. In many cases, it is more effective to implement an algorithm based on the recursion (although it’s better in terms of time complexity, space complexity grows).

There also are some totally sick complexities like O(2ⁿ) or O(n!). They are not that common so I’ll not spend much time describing it. I just want to mention the real-life example (just for fulfilling your curiosity). They’re basically all brute force algorithms when you want to find the particular value testing every possible combination (usable if you want to break a password).

Summary

That’s it for today. At the beginning of this post, I've mentioned that big O notation is something only related to the Time Complexity. Sike! 😜 That’s not entirely true, it’s used to describe all complexities, but it’s mostly used in terms of time.

I strongly encourage you to check out this website: bigocheatsheet.com